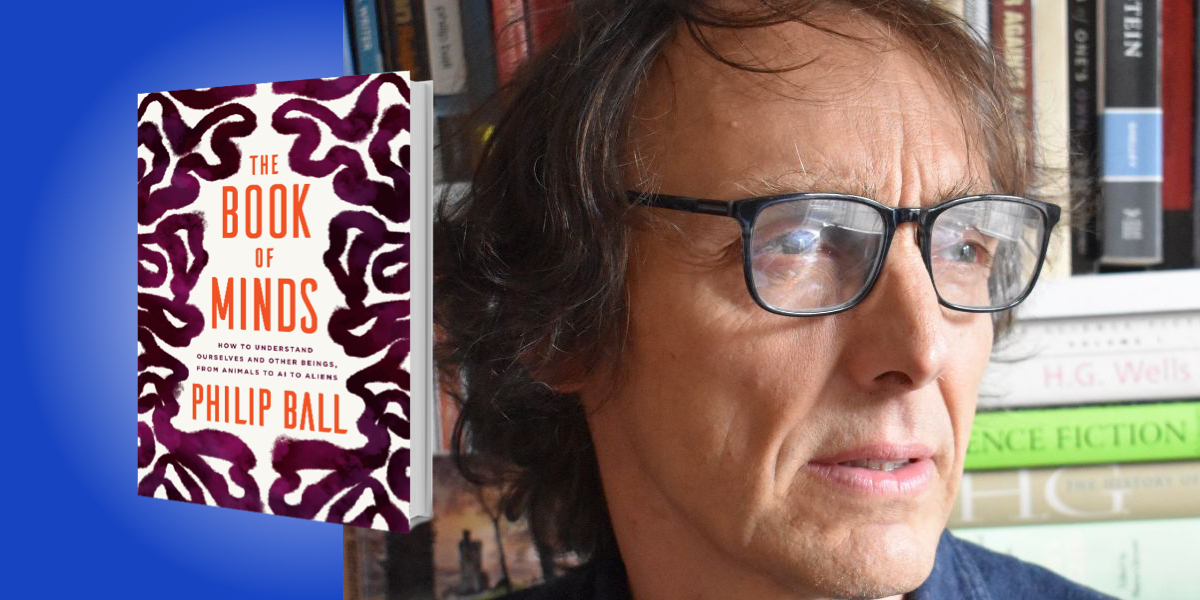

Philip Ball is a science writer and broadcaster, and was an editor at Nature for more than twenty years. His book Critical Mass won the 2005 Aventis Prize for Science Books. Ball is also a presenter of Science Stories, the BBC Radio 4 series on the history of science. He trained as a chemist at the University of Oxford and as a physicist at the University of Bristol.

Below, Philip shares 5 key insights from his new book, The Book of Minds: How to Understand Ourselves and Other Beings, from Animals to AI to Aliens. Listen to the audio version—read by Philip himself—in the Next Big Idea App.

1. There is a space of possible minds.

In 1984, the computer scientist Aaron Sloman published a paper arguing that it was time to rethink the concept of the mind, in the light of new information on human and animal cognition, as well as AI. Sloman’s paper was titled “The structure of the space of possible minds.” He wrote that:

Clearly there is not just one sort of mind. Besides obvious individual differences between adults, there are differences between adults, children of various ages and infants. There are cross-cultural differences. There are also differences between humans, chimpanzees, dogs, mice and other animals. And there are differences between all those and machines.

He argued that minds exist not along a line that measures something like intelligence, but in a multi-dimensional, richly structured space. When it comes to the cartography of minds, we don’t yet know what the coordinates are, but some researchers have speculated about what the map might look like.

Perhaps there’s a dimension that measures “intelligence,” meaning how much information-processing the mind can do to turn a stimulus into a response. Put that way, computers have plenty of intelligence. And maybe there’s a dimension that measures consciousness, awareness, or experience—how much inner life the mind has. No computer system has that, but we surely do. In all probability, so do some animals. Babies may have more limited intelligence than adults, but no less experience. Maybe there’s a dimension that measures memory, and another that measures purpose or intentionality. Perhaps it’s too simple to suppose that intelligence is a single thing. Even humans differ in types of intelligence: some people have great spatial awareness, others are brilliant at math, or have social intelligence.

Any individual sits at a location somewhere in this space of minds. Humans are clustered into a cloud, and perhaps nearby there’s another cluster for chimpanzees, and then scattered further and wider there are different sorts of bird minds, and octopus minds, and so on. And then we can ask: what lies right over here, or there? What sorts of minds are possible?

2. Minds exist to free us from genetically hard-wired behavior.

All minds (we know of) have evolved by natural selection. They are related through branches in the evolutionary tree of life. Each has been shaped by the demands of its environment. Dolphins use echolocation because that can be more useful than vision underwater. Some birds have minds that can sense the Earth’s magnetic field.

All of these natural minds help the mind-bearer survive and reproduce, by generating some kind of adaptive behavior. These minds respond to the environment with behavior that will benefit the creature—in effect, the mind makes a prediction about the best thing to do in the circumstances.

This view of the mind as a predictor suggests how it differs from a machine-like stimulus-response system. The simplest organisms, like bacteria, often show behavior that is hard-wired: a limited repertoire of actions is generated reliably and predictably by stimuli from the environment. But human and animal minds are not stimulus-response circuits for creating actions. They are the alternative to automaton-like behavior.

In other words, minds exist to free us from our genetic hard-wiring by allowing actions that aren’t pre-programmed. The amazing thing about humans is not that our genes affect how we make choices, but that so much of our behavior escapes their dominating influence. Complex minds have a vastly expanded behavioral repertoire that can be fine-tuned and improvised to suit new circumstances. This makes evolutionary sense: if it’s optimal for survival for a creature to have lots of behavioral options, then evolution can either hard-wire a response to every foreseeable circumstance or, more efficiently, build that creature a mind.

3. Everything alive might have a kind of mind.

You have a mind, as do I. You probably accept that some animals have minds—gorillas, dogs, and even in their imperious way, cats. But what about an ant? An ant has a tiny brain, and can show quite complex behavior. Perhaps you might agree that all animals have minds.

But how about a plant? Plants don’t have brains or nerves, so how could they think? Plant cells pass electrical signals between them, a little like neurons do. Plants can move, respond to stimuli, and learn. The Venus fly-trap, which traps and digests insects, can count; it counts the number of movements it senses from an insect that has landed on it, so that it doesn’t waste energy consuming a stray piece of dust. Time-lapse videos of plant roots or tendrils of a climbing plant look weirdly like the behavior of an intelligent creature, sensitive to touch.

Some biologists think we should talk of plant minds. Some go further, arguing that every living entity, right down to single-celled bacteria, behaves in a cognitive way—there is some kind of decision-making going on. Does this entail a mind? Does a bacterium or a fungus or plant have anything like sentience?

We still don’t have a full scientific understanding of what sentience or consciousness is, but some argue that as soon as a cell opens its membrane so that things outside of it affect the internal state of the cell, then that generates a flicker of “proto-feeling.” Brains, minds, and cognition are, in this view, an aggregate of atoms of sentience. This is a position called biopsychism, which supposes that all organisms have minds of sorts by virtue of being alive.

4. Artificial general intelligence might not exist.

When research on artificial intelligence in computer systems began in the 1950s, some researchers believed we would have “fully intelligent machines” within a few decades. That over-optimism seems to have stemmed from a woefully inadequate view of what human-like intelligence and cognition are: as if the highest expression of the human mind were an ability to play chess.

One of the goals of AI today is creating machines that can do all of the things a human can do: a capability called artificial general intelligence, or AGI. But today’s AI finds easy what we find hard (like complex calculations), and vice versa. AI lacks common sense, struggling to do things toddlers can do without forethought. Common sense is largely an adaptive, intention-driven form of behavior motivated and informed by our internal representations of reality: by intuitive physics and intuitive psychology. These aren’t things that will appear in AI if we make the circuits bigger and give them more processing power. If we want machines to exhibit common sense, we would have to put that in by hand.

But looked at within the space of possible minds, AGI seems curiously arbitrary. We are just one location in this mind-space, and there’s no particular reason to regard it as special. Why steer AI there? Why give AI all our foibles and limitations? Can we even be sure that an artificial mind could do all that we do if it lacks consciousness? There are probably many other kinds of minds AI could have. Even if it could mimic ours, that wouldn’t imply that the same kind of mind will underlie that behavior. Maybe AGI is just a pointless fantasy.

5. Free will is real.

If minds didn’t make decisions, they would have no point. Evolution might as well produce a mindless machine-like system that responds like a puppet to its environment. What would be the point of a consciousness that watches helplessly as it responds like an automaton?

Yet the debate about whether free will exists has raged for centuries. Some scientists insist that it can’t exist, because everything that happens follows inexorably from the exact state of the universe just a moment before, impelled by blind forces between atoms. In the operation of those physical laws, what space remains for free will?

What those arguments overlook is the question of causation. Just because any event can be dismantled into the atoms and fundamental forces involved doesn’t mean that those atoms and forces were the cause. There is now clear evidence that in some complex systems causation is not all bottom-up: the main causal influence can emerge at higher levels of organization. And this is exactly what we intuit anyway: no one imagines that the real author of The Great Gatsby was the atoms of F. Scott Fitzgerald’s brain.

To believe in free will, we need only recognize that our actions and decisions are determined by the volitional decision-making neural circuits of our brains. They, and not their component atoms, are the real cause of what we do.
