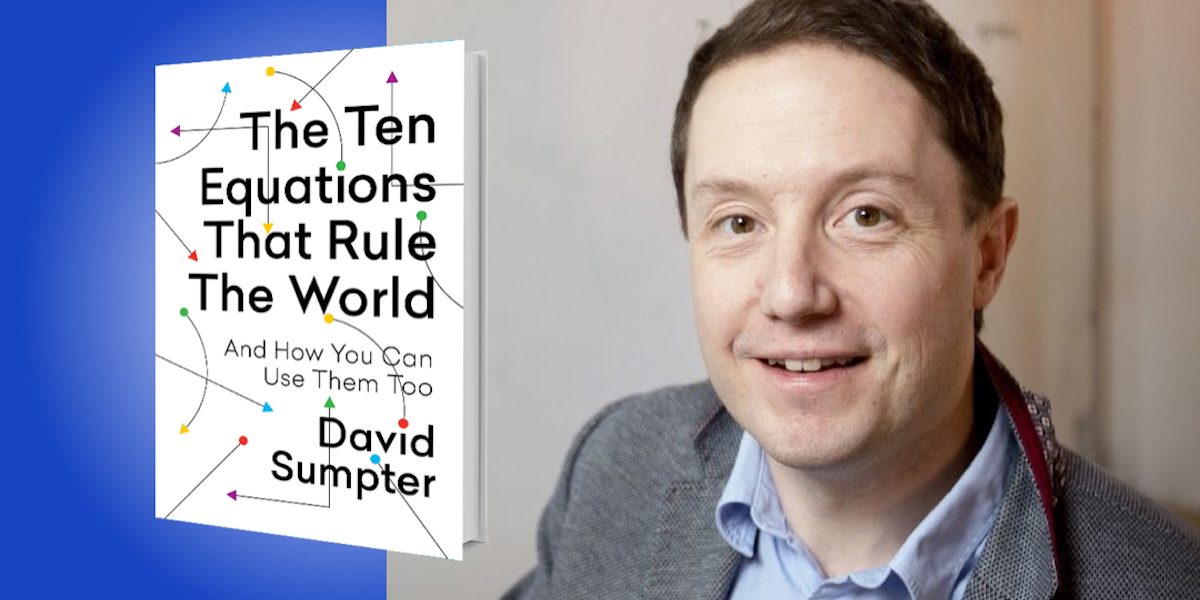

David Sumpter is Professor of Applied Mathematics at the University of Uppsala, Sweden, and the author of Soccermatics and Outnumbered. His scientific research ranges from ecology and biology to political science and sociology, and he has been an international consultant in government, artificial intelligence, sports, gambling, and finance.

Below, David shares 5 key insights from his new book, The Ten Equations That Rule the World: And How You Can Use Them Too. Listen to the audio version—read by David himself—in the Next Big Idea App.

1. Every problem can be broken down into three components: data, model, and nonsense.

Data is what you observe—it is your social media feed, your friends’ behavior, the things your colleagues at work do and say. Data doesn’t have to be numbers, and it can be subjective, but it has to come from what you see, hear, and read about in the world.

A model is how we reason about the data. How can we decide whether a person who makes a nasty comment is an idiot, or just a nice person having a bad day? How can we know if a four-star-rated hotel is worth booking? How can we see our own successes and failures more clearly in relation to others’? Answering these questions starts with asking whether we have the correct model: the correct way of seeing the world. This is where equations come in. They help us improve our models of the world. They help us see why even nice people sometimes do bad things. They help us lift the filter that social media creates when we view the world. Models, when combined with data, bring clarity.

Then there is nonsense. We should read the word literally: “non-sense.” These are thoughts that are neither data (they don’t come from our senses) nor models (they do not help us reason about the data). We all have nonsense thoughts—when we daydream, when we listen to our favorite song, when we think about those around us. We don’t want to lose that nonsense, but we do want to understand it for what it is.

2. The numbers are in: We should be more forgiving.

Imagine a situation where someone has let you down or treated you badly. You want to work out whether they are an idiot who does nasty things because they enjoy it, or a nice person who has simply made a mistake. Start by thinking of the proportion of idiots and nice people in the world. (This is your model.) Let’s say you think that 95% of people are nice, so only 5% are idiots. And let’s also say that idiots do nasty things 50% of the time, and that even nice people make mistakes 10% of the time.

Now comes the data. Someone you have just met has let you down. Maybe they said something behind your back, or seemed to deliberately ignore you. The data is the person’s actions. The judgement equation—which originated more than 250 years ago from the work of an English reverend named Bayes—allows you to work out which model, the “idiot” model or the “nice” model, is correct.

It works like this: if 95 out of every 100 people are nice, and 10% of them make a mistake, we might expect 9.5 of them to let you down. Of the 5 people who are idiots, we expect 50%, or 2.5, to be deliberately nasty. Comparing 9.5 to 2.5 shows that, even after someone lets you down, the probability that they are an idiot is only 2.5 divided by 12 (9.5 + 2.5). So there’s only about a 20% chance that they are truly an idiot.
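The calculation above is Bayes’ rule. Written out with the same assumed numbers (5% of people are idiots, who let you down half the time; 95% are nice, and let you down 10% of the time), it reads:

$$
P(\text{idiot} \mid \text{let down})
= \frac{P(\text{let down} \mid \text{idiot})\,P(\text{idiot})}{P(\text{let down} \mid \text{idiot})\,P(\text{idiot}) + P(\text{let down} \mid \text{nice})\,P(\text{nice})}
= \frac{0.5 \times 0.05}{0.5 \times 0.05 + 0.1 \times 0.95}
= \frac{0.025}{0.12} \approx 0.21
$$

Multiplying the top and bottom by 100 gives the 2.5 divided by 12 used in the text.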

The numbers we use here are subjective, but the reasoning isn’t. Whatever your outlook, math says that you should almost always forgive others (the first few times at least). You can apply the same logic to worrying (or not) about an airplane crash, and even to deciding whether your teenage kids are likely to become depressed from using their phones too much (the science says they are going to be fine). In short, the judgement equation, the rule that Bayes invented, usually tells us to give each other another chance.
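The “first few times at least” caveat can be checked by applying the rule repeatedly. The sketch below is a minimal illustration, not the book’s own code: the function name `posterior_idiot` is hypothetical, and it assumes each letdown is independent evidence, so the answer after one incident becomes the prior for the next.

```python
def posterior_idiot(prior_idiot, p_nasty_if_idiot, p_nasty_if_nice, times_let_down=1):
    """Probability that someone is an 'idiot' after they have let you down
    `times_let_down` times. Assumes each incident is independent evidence."""
    p_idiot = prior_idiot
    for _ in range(times_let_down):
        # Bayes' rule: weight each model by how well it explains the letdown.
        evidence_idiot = p_nasty_if_idiot * p_idiot
        evidence_nice = p_nasty_if_nice * (1 - p_idiot)
        p_idiot = evidence_idiot / (evidence_idiot + evidence_nice)
    return p_idiot


# The article's numbers: 5% idiots, nasty 50% of the time; nice people slip 10% of the time.
for n in range(1, 4):
    print(n, round(posterior_idiot(0.05, 0.5, 0.1, n), 2))
```

With these numbers it prints 0.21, 0.57 and 0.87 for one, two and three letdowns: forgiving the first time is well supported, but the case for forgiveness weakens quickly with each repeat.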

3. Statistical correctness doesn’t care about your feelings.

We have all heard about being politically correct, but my idea, statistical correctness, is different. It starts with the data.

My colleague, Moa Bursell, spent two years applying for jobs in Sweden. In total, she applied for over two thousand different positions in computing, accounting, teaching, driving, and nursing. But she wasn’t looking for a job: she was testing the biases of the employers she was writing to. For each position, Moa created two separate CVs and cover letters, both detailing similar work experience and qualifications. Once she had finished the applications, she randomly assigned a name to each of the CVs. The first name sounded Swedish, like Jonas Söderström; the second sounded non-Swedish, such as Kamal Ahmadi.

She found that applicants with Swedish-sounding names were twice as likely to be called back for a job interview as those with Muslim- or African-sounding names. This is the statistically correct reality for immigrants in Sweden: It is more difficult for them to find jobs.
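The design described above, two near-identical applications per job with the names assigned at random, can be sketched as follows. This is an illustrative sketch, not Bursell’s actual procedure: the helper names are hypothetical, and the callback counts passed to `callback_ratio` are toy numbers, not her data.

```python
import random


def assign_names(cv_pair, names, rng):
    """Randomly pair the two names with the two near-identical CVs, so that
    any difference in callbacks cannot be blamed on the CV itself."""
    shuffled = list(names)
    rng.shuffle(shuffled)
    return dict(zip(shuffled, cv_pair))


def callback_ratio(callbacks_a, sent_a, callbacks_b, sent_b):
    """Callback rate of group A divided by the callback rate of group B."""
    return (callbacks_a / sent_a) / (callbacks_b / sent_b)


rng = random.Random(1)
pairing = assign_names(("CV_1", "CV_2"), ("Jonas Söderström", "Kamal Ahmadi"), rng)
print(pairing)

# Toy counts, not the study's data: 20 callbacks per 100 versus 10 per 100.
print(callback_ratio(20, 100, 10, 100))
```

Randomizing which CV carries which name is what lets a difference in callback rates be attributed to the name rather than to the CV; a ratio of 2 is what “twice as likely” means.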

In another experiment, Katrin Auspurg presented people with a series of short descriptions of hypothetical employees, giving each one’s age, gender, length of service, role at work, and salary. She then asked whether the salary was fair. On average, the respondents, both male and female, believed that the women in the scenarios should be paid 92 cents for every dollar paid to a man for doing the same job. At the same time, the vast majority of the respondents, when asked the question directly, agreed that men and women should be paid the same.
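Studies like this are usually called factorial surveys or vignette experiments. The sketch below shows the general idea only: the attribute levels are hypothetical, and it simplifies each answer to a single salary the respondent judges fair, which is an assumption rather than how Auspurg recorded responses. Because every combination of the other attributes appears once with each gender, comparing the average fair salary for women and for men isolates the effect of gender.

```python
import itertools

# Hypothetical attribute levels, for illustration only.
ATTRIBUTES = {
    "age": [30, 45, 60],
    "gender": ["woman", "man"],
    "years_of_service": [2, 10, 20],
    "role": ["clerk", "manager"],
}


def build_vignettes(attributes):
    """Full factorial design: one vignette per combination of attribute levels."""
    keys = list(attributes)
    return [dict(zip(keys, combo)) for combo in itertools.product(*attributes.values())]


def gender_pay_ratio(judgements):
    """judgements: list of (vignette, salary judged fair) pairs.
    Returns the mean fair salary for women divided by the mean for men."""
    women = [pay for vignette, pay in judgements if vignette["gender"] == "woman"]
    men = [pay for vignette, pay in judgements if vignette["gender"] == "man"]
    return (sum(women) / len(women)) / (sum(men) / len(men))


vignettes = build_vignettes(ATTRIBUTES)
print(len(vignettes))  # 3 * 2 * 3 * 2 = 36 descriptions
```

A ratio of 0.92 from such judgements would correspond to the “92 cents for every dollar” finding.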

We hear a lot of nonsense about gender, race, and sexuality. But experiments like these aren’t about opinions; they are about whether or not discrimination is really there. Not every question about discrimination can be settled by data, but starting from a view that is statistically correct helps us avoid many common pitfalls.

4. Think like an ant.

Many ant species leave pheromones, or chemical markers, to show their nest-mates where they have been. When they find sugary food on the ground, they deposit their pheromone, and the other ants follow this pheromone to find the food. These chemical markers communicate how much reward an ant is getting from the food, and as a result, ants can track the quality of many different food sources.

We humans can do something similar. Let’s take an example that anyone who has lived through the pandemic is familiar with: binge-watching Netflix. The first episode of a Netflix series always seems good. But as the series goes on, the quality can dip. So I propose using a model, the “reward equation,” to decide when to keep watching and when to quit.

To use the equation, we keep track of a quality score. Let’s say that the first episode is a 9 out of 10, and then the second is a 6. We put these two values in the reward equation, and rounding up, we get a new score: 8. If the next episode is a 7, then the quality score will remain at 8 (because I round up). Now here is the key: If the series quality drops to 7 or below, then I stop watching and find something else.
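The article does not spell out the reward equation. A common form of this kind of running-average rule, and the one assumed here, is Q ← Q + α(R − Q): the score Q moves a fraction α of the way towards each new episode rating R. The value α = 0.5, the function names, and the choice to keep the unrounded score internally are all assumptions; α = 0.5 happens to reproduce the steps above (9, then 6, gives 8; a further 7 keeps it at 8).

```python
import math


def update_quality(score, episode_rating, alpha=0.5):
    """Assumed reward equation: move the running score a fraction `alpha`
    of the way towards the latest episode's rating."""
    return score + alpha * (episode_rating - score)


def watch(ratings, alpha=0.5, stop_at=7):
    """Return the rounded-up quality scores and whether we quit the series."""
    score = None
    history = []
    for rating in ratings:
        # The first episode sets the initial score; later ones update it.
        score = rating if score is None else update_quality(score, rating, alpha)
        shown = math.ceil(score)  # round up, as in the text
        history.append(shown)
        if shown <= stop_at:
            return history, True  # quality has dropped to 7 or below: stop
    return history, False


print(watch([9, 6, 7]))
print(watch([9, 6, 7, 5]))
```

Here `watch([9, 6, 7])` returns `([9, 8, 8], False)`, and adding a fourth episode rated 5 gives `([9, 8, 8, 7], True)`: the rounded score has reached 7, so it is time to stop. A larger α reacts faster to a bad episode; a smaller one gives a good early impression more weight.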

The reward equation is used by media companies to measure our engagement. They monitor what we do, and then they decide whether we should get more of it. But by using the equation yourself, you can avoid getting sucked into things you’re not really interested in.

5. There are ten equations that rule the world.

There is a small group of mathematicians and engineers who have used equations to transform social media, to make massive profits in gambling and finance, and to design new technologies in artificial intelligence. I call this group “TEN” after the equations they need to know, the equations I write about in my book.

How do I know about TEN? The answer is simple: I am a member. I have been involved in its inner workings for twenty years, and I have experienced firsthand the rewards that access to its code can bring. I have solved scientific problems in fields ranging from ecology and biology to political science and sociology. I have been a consultant on issues involving government, artificial intelligence, sports, gambling, and finance.

Membership in this club has brought me into contact with many fascinating people, like the data scientists who work with sports stars, and the technical experts employed by Google, Facebook, Snapchat, and Cambridge Analytica, who control social media and are building our future artificial intelligence. People like Marius and Jan, young professional gamblers who have found an edge on the Asian betting markets. People like Mark, whose microsecond calculations skim profits from small inefficiencies in share prices. I have witnessed firsthand how researchers like Moa Bursell use equations to detect discrimination, understand our political debates, and make the world a better place.

The consequences of allowing our world to be run by mathematics are far-reaching. They are partly good and partly bad—but they are undeniable.
