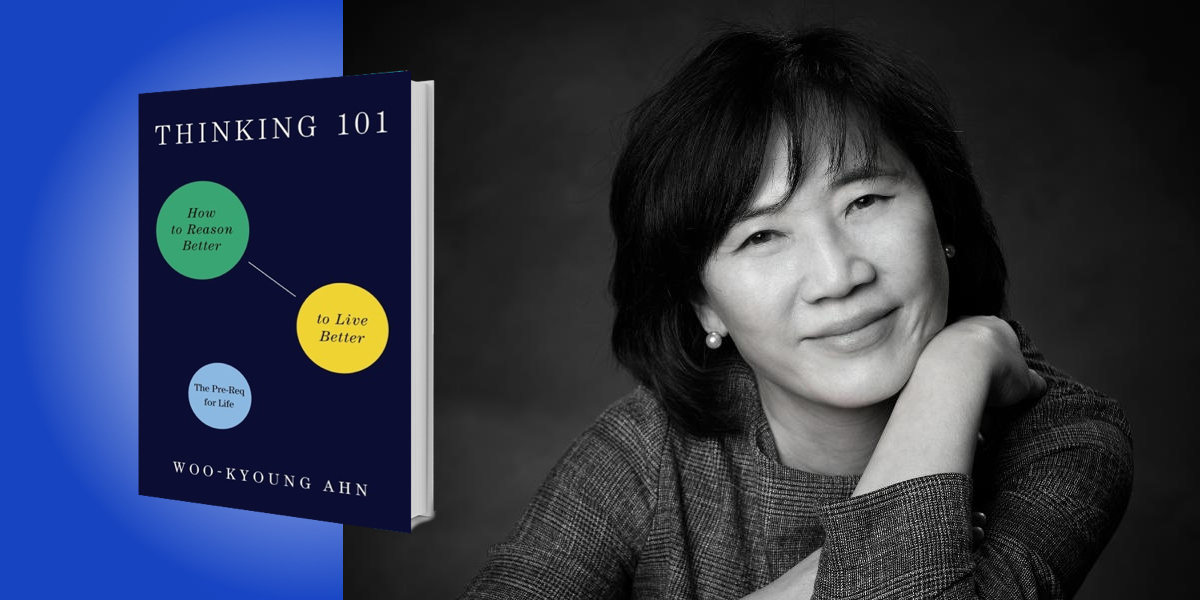

Woo-kyoung Ahn is the John Hay Whitney Professor of Psychology at Yale University. After receiving her Ph.D. in psychology from the University of Illinois, Urbana-Champaign, she was assistant professor at Yale University and associate professor at Vanderbilt University. In 2022, she received Yale’s Lex Hixon Prize for teaching excellence in the social sciences. Her research on thinking biases has been funded by the National Institutes of Health, and she is a fellow of the American Psychological Association and the Association for Psychological Science.

Below, Woo-kyoung shares 5 key insights from her new book, Thinking 101: How to Reason Better to Live Better. Listen to the audio version—read by Woo-kyoung herself—in the Next Big Idea App.

1. Thinking errors can happen even when we mean well.

Let’s start with an error called confirmation bias. It is the tendency to confirm what one already believes. Suppose a person thinks that echinacea cures the common cold, so he takes echinacea whenever he feels a sore throat, and he usually gets better within two or three days. The evidence he has gathered strongly supports his hypothesis, so it seems rational for him to keep believing that echinacea cures the common cold.

But a critical piece of data is missing: what would happen if he did not take echinacea the next time he felt a sore throat? If he still got better in a couple of days, his recovery likely had nothing to do with echinacea. So, if this person never tests what would happen without echinacea, he is committing confirmation bias.

That is exactly how the medical malpractice of bloodletting persisted in Western society for 2,000 years. The healers wanted to cure sick people, and every time someone got sick, they drained the patient’s “bad blood.” Most patients got better, but the healers never tested what would happen without bloodletting. Confirmation bias can happen with the best of intentions.

Another thinking error is the planning fallacy: we underestimate how much time, money, and effort it takes to complete a task. Almost everybody is guilty of this, and some examples are truly spectacular. Boston’s Big Dig highway construction project went $19 billion over budget and took ten years longer than planned. The Sydney Opera House was initially budgeted at $7 million but ended up costing $102 million, and even the scaled-down version that was finally built took ten years longer than originally estimated.

What is remarkable about the planning fallacy is that it happens even when it is in our best interest to make the most accurate estimate. Nobody wants to spend extra money and time because of poor planning, and it is obviously very stressful to miss deadlines and budget targets.

2. Thinking errors are byproducts of adaptive cognitive systems.

Let’s start with an analogy. We evolved to crave high-calorie foods, which is wonderful for survival when resources are scarce. However, the same drive to eat high-calorie foods can make us overweight. Many thinking errors happen because our cognitive systems evolved to solve problems that help in survival, but the same mechanism backfires under other circumstances.

To illustrate this, let’s talk about metacognition. This is knowing whether you know something, such as knowing whether you can swim. Even if you have not gone swimming for years, you can run a mental simulation of swimming. If it feels fluent in your head, that means you can swim. Metacognition is an essential part of survival, as it prevents us from attempting something that we cannot do, like trying to fly. Usually, things that feel easy in our minds are easy to do.

However, when we rely on fluency for metacognition, it contributes to the planning fallacy. We plan by running a mental simulation of a task, and this typically runs more fluently in our minds than it would in reality. Suppose you are planning holiday shopping, and you come up with a list of people for whom you would like to buy gifts. Once you come up with the list of recipients and gifts, it makes the whole task feel like it will run smoothly. You just need to buy the gifts, but that causes you to underestimate how long it takes to complete the task. Ironically, studies have shown that the more detailed and specific a plan is, the more feasible it looks, causing people to underestimate the completion time even more.

Another example of how thinking errors are byproducts of adaptive cognitive systems is seen with confirmation bias. Suppose you go to a supermarket two miles away from your house, and you find that their apples are good. Next time you want apples, you could try a different supermarket to avoid confirmation bias, but you might as well go back to the first supermarket where the apples were satisfactory. It’s more efficient and less risky, even though it is a type of confirmation bias. When the goal is to get by with things that are good enough, it’s better to continue with what you know rather than explore uncertain possibilities. Confirmation bias is a byproduct of our need to save energy and lessen risk.

3. Thinking errors are prevalent and can have devastating consequences.

Let’s start with a benign example of my own confirmation bias. When my son was four years old, he asked me why a yellow traffic light is called a yellow light. I patiently told him that it was called a yellow light because it was yellow. Then he told me it was orange. As I wondered whether my husband had neglected to tell me that he was color-blind, I told my son, “No way.” He insisted, so I looked at it again, and it was orange. Up until that point, I had been seeing it as yellow because everybody called it yellow. Next time you see a traffic light, take an objective look yourself.

Seeing a yellow light as orange doesn’t hurt anybody, so long as I prepare to stop. We always interpret the world based on what we already know. Otherwise, we cannot make any sense of it. But this adaptive cognitive mechanism also causes prejudice and stereotypes to persist in society.

In one study, researchers examined the gender pay gap. The participants were science professors at prestigious universities, and they were asked to rate a candidate for a laboratory manager position. All the professors in this study were presented with the same resume, except that on half of the copies the applicant’s name was Jennifer, and on the other half, it was John. Even though Jennifer’s and John’s credentials were identical, the professors rated John as significantly more competent and hirable, and the average salary they offered John was about $3,500, or 13 percent, higher than what they offered Jennifer.

Such bias is particularly devastating because of a vicious cycle. When John gets hired, he’ll probably do a good job, so these professors will continue to believe that men are better than women in science—confirmation bias. The same thing can happen with other types of prejudice involving race, ethnicity, sexual orientation, you name it.

Another example of how thinking errors can have significantly negative consequences is seen in the framing effect. People are affected by the way options are framed: ground beef described as 85 percent lean tastes leaner, healthier, and better than the same beef described as 15 percent pure fat. And the framing effect can be a matter of life or death. In one study, when patients with lung cancer were told they had a 90 percent chance of surviving if they underwent surgery, a large majority opted for the operation. But when another group of patients was told they had a 10 percent chance of dying after the surgery, only half of them chose the surgery.

4. There are ways to reduce thinking errors.

Before we talk about how to reduce thinking errors, let me explain what does not work. Merely learning about these errors and spotting them is not sufficient. It’s like insomnia. Anyone who suffers from insomnia knows that they can’t sleep, but insomnia cannot be fixed by simply thinking, I should sleep more.

One solution is taking advantage of thinking fallacies. For instance, since we are affected by the way questions are framed, we should ask the same question in two different frames. In one study, people who were asked how unhappy they were with their social life judged themselves to be less happy than people who were asked how happy they were. To avoid being swayed by a single frame, ask both.

Similarly, we can avoid confirmation bias by exploiting our tendency to confirm the hypothesis. We just need to confirm two versions of the same hypothesis. Suppose a person believes that men are good at science, and this person has collected plenty of evidence confirming this. Afterward, the person should test the other side of the same hypothesis, meaning whether women are bad scientists. The person should check what happens if women are given an opportunity to become scientists. The person would find that women can be good scientists when given an opportunity, and that evidence disconfirms the original hypothesis.

5. Trying to be perfectly rational is irrational.

To make the most rational and optimal choices, we must search for as many options as possible. Individuals vary widely in how much they maximize the kinds of searches they need to make throughout their lives. Some people are always on the lookout for a better job, even when they are satisfied with their current one, or fantasize about living a different kind of life, or write several drafts of even the simplest letters and emails. On the other hand, others do not experience much difficulty shopping for a gift, easily settle for what is satisfactory, or don’t agree that one must extensively test a relationship before committing to it. Studies have found that those who maximize their search tend to make more money but are less happy than those who don’t. When people don’t obsess over a perfect solution, they can settle down and enjoy what is good enough.

Recently, grit and a growth mindset have been mentioned as important virtues. I agree, but sometimes too much self-regulating can hurt us. In one study, participants varying in their desire for self-control were presented with the heinous task of typing statements written in a foreign language, with the additional challenge of skipping the letter “e” and not using the spacebar. Those with a high desire for self-control performed worse because they quickly realized the gap between the perfection they strive for and their actual performance. When their goal appears unreachable because a task is so hard, they put in less effort and give up rather than try their best.

I encourage everyone to spend some time learning about thinking errors, talking about how we can do better, and not trying to be perfect when it’s fine to be good enough.